More journalistic mess-ups in the news today, this time from the Associated Press, which labeled director/producer Costa-Gavras as dead when he is very much alive. Via Alexios Mantzarlis, here’s a snapshot of the AP headline on the Washington Post from yesterday (I think?), reporting a hoax that happened almost a week ago.

How did it happen? Well, a person claiming to be the Greek Minister of Culture posted a tweet saying there was breaking news to this effect. Here is the account as it looked at the time of the tweet:

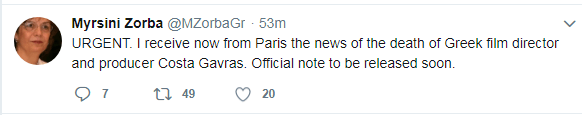

And here is the tweet:

Twenty minutes later this tweet followed:

And soon the handle and picture were changed:

https://twitter.com/TDebNews/status/1035153092826869765

Now in retrospect people always say such things are obvious fakes. Why is this in English, for example? Why was she using this weird badly lit press photo instead of either a personal photo or a government headshot?

But as I say over and over again, this is like changing lanes without checking your mirrors or doing a head check, getting in a collision, and then talking about all the reasons you “should have known the car was there.” Didn’t you think it odd no one had passed you? Didn’t you hear the slight noise of an engine?

None. Of. This. Matters.

I mean, it does matter. But the likely reason that you crashed is not that you didn’t apply your Sherlock powers of deduction. It’s that you didn’t take the 3 seconds to do what you should have.

Stop Reacting and Start Doing the Process

Last week I published an article that takes less than five minutes to read and shows how journalists and citizens can avoid such errors. I don’t mean to be egotistical here, but if you are a working reporter and haven’t read it you should read it.

Here’s an animated gif from that.

See a tweet. Think about retweeting. Look for verification checkmark. See what they are verified as. Three seconds.

Now what if they are not verified? Or if you’ve never heard of the publication they are verified for?

Well, then you escalate. You start to pull out the bigger toolsets: Wikipedia searches, Google News scans on the handle, the kind of thing Bari Weiss missed a while back that led her to publish quotes from a hoax Antifa account in The New York Times. Those ever so slightly more involved procedures look like this:

If that doesn’t work, you escalate further. Maybe get into more esoteric stuff: the Wayback Machine, follower analysis. Or if you are a reporter, you pick up the phone.
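The escalation described above could be sketched as a tiny decision function. This is only an illustration under stated assumptions: `Account`, its fields, and `next_step` are hypothetical names, and the fields are filled in by a person looking at the profile, not by any real Twitter API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    # Hypothetical fields, filled in by a human glancing at the profile.
    verified: bool                       # is there a verification checkmark?
    verified_as_known_outlet: bool       # do you recognize who they are verified as?
    search_confirms_identity: Optional[bool] = None  # None = search not done yet


def next_step(account: Account) -> str:
    """Return the next action, escalating only when the cheap check fails."""
    # Tier 1: the three-second check.
    if account.verified and account.verified_as_known_outlet:
        return "stop: source checks out"
    # Tier 2: slightly more involved lookups.
    if account.search_confirms_identity is None:
        return "escalate: Wikipedia search, Google News scan on the handle"
    if account.search_confirms_identity:
        return "stop: source checks out"
    # Tier 3: the esoteric stuff, or the phone.
    return "escalate: Wayback Machine, follower analysis, or pick up the phone"


if __name__ == "__main__":
    print(next_step(Account(verified=False, verified_as_known_outlet=False)))
```

The point of the shape is that most calls end at the first `if`, and the expensive steps only run when the cheap ones fail.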

It was Sam Wineburg who did me the biggest service when I was writing my textbook for student fact-checkers. He talked about the need to relentlessly simplify (which I was already trying to do, though he pushed me harder). But he also told me who I should read: Gerd Gigerenzer. And that changed a lot.

Gigerenzer’s work deals with risk and uncertainty, and how different professional cultures deal with these issues. What he finds is that successful cultures figure out what the acceptable level of risk is, then design procedures and teach heuristics that take big chunks out of that risk profile while remaining relatively simple. Airline safety culture is a good example of this. How much fuel do you put in a plane? There’s a set of easily quantifiable variables:

- fuel for the trip

- plus a five percent contingency

- plus fuel for landing at an alternate airport if required

- plus 30 minutes holding fuel

- plus a crew-determined buffer for extreme circumstances

(via Gigerenzer, 2014)

That last one is a buffer, but you’ll notice something with the other ones: they don’t really achieve precision. If you were to calculate the fuel needs of each flight individually, you could probably get closer to the proper fuel amount. I know, for example, that your chances of needing 30 minutes of holding fuel for landing at Portland (PDX) are probably dramatically lower than when heading to Philadelphia (PHL). And the five percent contingency is supposed to make up for miscalculations in things like windspeed, but doesn’t account for the fact that different seasons and different flight paths have different levels of weather uncertainty.

The problem is that you can add those things back in, but now you’re reintroducing complexity back into the mix, and complexity pushes people back into error and bias.

That crew-determined buffer is important too, of course. If, after all the other factors are accounted for, the fuel amount still doesn’t seem sufficient, the crew can step up and add fuel (but importantly, not subtract). But the rule of thumb doesn’t waste their energy on the basic fuel calculation; they save it for dealing with the exceptions, the weird things that don’t fit the general model, where nuance and expertise matter.
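To make the fuel rule of thumb concrete, here is a minimal sketch of the arithmetic. The function name, parameter names, and the kilogram figures in the example are all hypothetical, chosen only for illustration; the five items themselves are the ones listed above.

```python
CONTINGENCY_PERCENT = 5  # the flat five percent from the rule of thumb


def required_fuel(trip_kg, alternate_kg=0, holding_30_min_kg=0, crew_buffer_kg=0):
    """Add up the rule-of-thumb items; all inputs are illustrative numbers."""
    # The crew may add fuel on top of the rule, never subtract from it.
    if crew_buffer_kg < 0:
        raise ValueError("crew buffer can only add fuel, not subtract it")
    contingency_kg = trip_kg * CONTINGENCY_PERCENT / 100
    return trip_kg + contingency_kg + alternate_kg + holding_30_min_kg + crew_buffer_kg


if __name__ == "__main__":
    # Made-up figures: 10,000 kg trip, 1,500 kg alternate, 1,200 kg holding.
    print(required_fuel(10_000, alternate_kg=1_500, holding_30_min_kg=1_200))
```

Notice what is not in there: no per-airport holding estimate, no seasonal weather adjustment. That is the trade being made. The only place judgment enters is the last parameter, and it can only push the number up.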

This is a long detour, but the point is this: rather than asking people to think individually about dozens of factors, each weighted with careful precision, what Gigerenzer calls “positive risk” cultures decide the acceptable level of risk for a given endeavor, then work together to design simple procedures and heuristics that, if followed, encode the best insights of the field when applied within the domain. At the end of the procedures there’s the buffer: you’re free to look at the result and think “the heuristic just doesn’t apply here.” But you have to explain yourself, and what’s different here. You apply the heuristic first and think second.

Does the AP Have a Digital Verification Process?

There’s another important piece about defining set processes: they can be enforced in a way that a general “be careful” can’t. We can start to enforce them as norms.

What is the AP process to source a tweet? Ideally, it would be some sort of short tree process — look for a blue checkmark. If found, do X, if not, do Y. The process would quickly sift out the vast majority of safe calls (positive or negative) in seconds.

That quick sifting is important, but just as important is the accountability it provides. Instead of looking at an error like this and discussing whether it was an acceptable level of error, we can start with the question “Was the process followed?” The nature of risk — as Gigerenzer reminds us — is that if you make no errors your system is broken, because you are sacrificing opportunity. So we shouldn’t be punishing people that just happen to be caught on the wrong end of a desirable fail rate.

But if a reporter risked the reputation of your organization because they didn’t follow a defined 5-second protocol — well that’s different. That should have consequences. These sorts of protocols exist elsewhere in journalism. Journalists aren’t accountable for lies sources tell them, but they are accountable for not following proper procedure around confirming veracity, seeking rebuttals, and pushing on source motivation.

Again, this isn’t meant to treat the heuristics or procedures as hard and fast laws. Occasionally procedures produce results so absurd you have to throw the rule book out for a bit. Experts do develop intuitions that sometimes outperform procedures. But the rule of thumb has to be the starting point, and expertise has to make a strong argument against applying it (or for applying a competing rule of thumb). And such “expert” deviations are clearly not what we are seeing here.

What Zeynep Said and Other Things

This is a wandering post, and it’s kind of meant to be — jotting down ideas I’ve been talking about here a while but haven’t pooled together (see here for one of my earlier attempts to bring Gigerenzer into it).

But there’s so much more and I have to post, and not all of it is about journalism. Some of it, unsurprisingly, is about education.

A couple threads I’ll just tack on here.

First, I have been meaning to write a post on Zeynep Tufekci’s NYT op-ed on Musk’s Thailand cave idiocy. Here’s the lead-up:

The Silicon Valley model for doing things is a mix of can-do optimism, a faith that expertise in one domain can be transferred seamlessly to another and a preference for rapid, flashy, high-profile action. But what got the kids and their coach out of the cave was a different model: a slower, more methodical, more narrowly specialized approach to problems, one that has turned many risky enterprises into safe endeavors — commercial airline travel, for example, or rock climbing, both of which have extensive protocols and safety procedures that have taken years to develop.

And here’s the killer graf:

This “safety culture” model is neither stilted nor uncreative. On the contrary, deep expertise, lengthy training and the ability to learn from experience (and to incorporate the lessons of those experiences into future practices) is a valuable form of ingenuity.

Zeynep is exactly right here, but I’d argue that while academia has escaped some of the worst beliefs of Silicon Valley, we still often worship this idea of domain-independent critical thinking. Part of it is because we devalue more narrowly contextualized knowledge. And a big part of it is that we devalue relevant *professional* knowledge as insufficiently abstract or insufficiently precise. We laugh at rules of thumb as fine for the proles but not for us with our peer-reviewed level of certainty.

But, as Zeynep argues, the process of looking at what competent people do and then encoding that expert knowledge into processes that novices can use is not only a deeply creative process itself, but it forms the foundation on which our students will practice their own creativity. And if we could get away from the idea of our students as professors in training for a bit, we could see that maybe?

Also, something I’ll talk about later is how rules of thumb relate to getting past the idea that we seek certainty. We don’t. We seek a certain level of risk, and the two things are very different. Thinking in terms of rules of thumb and 10-second protocols not only protects against error, but it prevents the conspiratorial and cynical spiral that asking for academic levels of precision from novices can produce.

And finally, that “norm” thing about journalists? It’s true for your Mom too. Telling your Mom she’s posting falsehoods is probably not nearly as effective as telling her the expectation is she does a 30-second verification process before posting. When norms are clear (don’t cut in line) they are more enforceable than when they are vague (don’t be a dick at the grocery store). Part of the reason for teaching short techniques instead of more fuzzy “thinking about texts” is that expecting a source lookup on a tweet is socially enforceable in a way a ten-point list of things to think about is not.

OK, sorry for the mess of a post. Usually after I write a trainwreck of a thing like this I find a more focused way to say it later. But thanks for sticking with this until the end. 🙂
