Unfortunately, I can’t make it to MisInfoCon or to the #fakenewssci conference happening right now on the East Coast (can we get a few West Coast misinformation conferences, please?). But I thought I’d offer my take on a way to frame the problem of misinformation on the web.

When you listen to the psychologists talk about misinformation, it can get pretty depressing. They’ll tell you that once people believe a thing, it can be very hard to dislodge that belief. And creating new beliefs doesn’t take much. Some repeated exposure to information (whether true or false) and an emotional frame to view it through does the trick. Easy to catch, hard to cure. In fact, trying to dislodge existing beliefs, even patently ridiculous ones like flat-eartherism, often produces a “backfire effect” that sets those beliefs in deeper.

When you listen to historians talk, it can be pretty uplifting, in a weird “we’re screwed but we always have been” sort of way. Fake news and slanted news have been around since day one of our species. If you believe theorists like Dan Sperber, our reasoning power evolved not to solve problems, but to slant news. So this is nothing new, and maybe our reaction to it is a moral panic.

Both of these takes, though, tend to leave me feeling a bit unsatisfied. That’s partially because the psychological and historical approaches provide insights but an inappropriate frame for improving the information environment. For me, the appropriate way to think about web-based misinformation is through a public health lens: specifically, the lens of epidemiology, which studies how disease spreads.

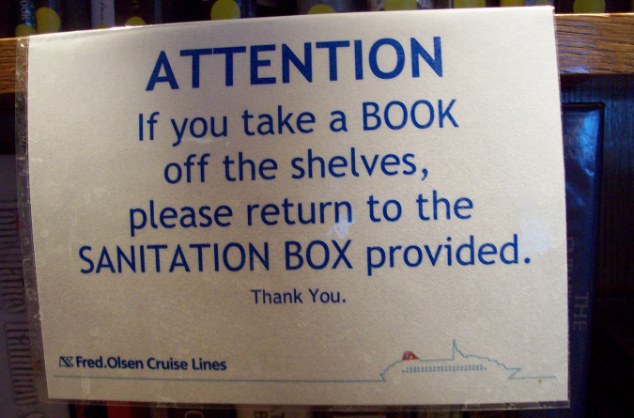

My view is that misinformation is a stomach bug, one that has existed since the dawn of time in various strains. And the web is a cruise ship. Combine the two and you get something like this:

> BAYONNE, N.J. (AP) — Kim Waite was especially disappointed to fall ill while treating herself to a Caribbean cruise after completing cancer treatment. The London woman thought she was the only sick one as her husband wheeled her to the infirmary — until the elevator doors opened to reveal hundreds of people vomiting into bags, buckets or on the floor, whatever was closest.
>
> “I started crying, I couldn’t believe it,” Waite said. “I was in shock.”
>
> Waite was among nearly 700 passengers and crew members who became ill during a cruise on Royal Caribbean’s Explorer of the Seas. The voyage was cut short and the ship returned to port Wednesday in New Jersey, where it was being sanitized in preparation for its next voyage.

I won’t go too deep into the whole epidemiology of stomach bugs and cruise ships, but let’s start with this. No cruise line looks at a room of 700 passengers with norovirus and says, “Well, they can’t be cured, so nothing can be done.”

There’s absolutely something to be done: prevent the room from having 701 people in it. The primary focus is on the people outside that room.

And yet, when we talk about fact-checking, the assumption is that its main use is to correct people’s beliefs, to “cure” people who are “sick.” It’s not.

Like the Social Web, Cruise Ships Are Viral by Design

A cruise ship is designed to push you closer to people you don’t know: it provides events, buzz, common meals, and trivia contests. And that social virality breeds the traditional, biological kind.

I’m no cruise ship expert, but if you think about what a cruise ship has to do to handle a stomach bug outbreak, you’ll get a lot further in thinking about web misinformation than if you cling to the idea of fact-checkers as missionaries.

What cruise ships do is try to stop the spread of the virus. And they do that by adopting many approaches at once.

For example:

- They set rules and influence behavior patterns that reduce the spread of the disease.

- They train their crews to identify potentially sick passengers earlier, and to act in ways that don’t further the spread.

- They set up isolation rooms.

- They sanitize the ship in between voyages.

And so on. Almost none of this activity deals with curing people who are infected.

Fact-Checks Aren’t a Cure, They’re Prevention

I’m not going to bore you with an extended, point-by-point analogy mapping each disease-control measure on a ship to a web misinformation-control strategy. But since I run a student-driven fact-checking project, let me talk about fact-checks. Because, again, I hear a lot of people saying, “You know, if a person believes something and reads a fact-check, they just have their beliefs reinforced.” And while that’s a true and important point, it gets the frame wrong.

Fact-checking isn’t a cure for misinformation. It’s prevention. It’s the hand sanitizer and the sinks around the ship that make it easy to wash your hands before you get infected or infect someone else. It’s information hygiene.

How do fact-checks accomplish this?

- They give news providers and politicians an incentive not to make up lies in the first place.

- If news providers and politicians produce lies anyway, an available fact-check can prevent someone from sharing the lie.

- If someone shares the lie, the availability of a fact-check allows a commenter on a post or tweet to shame the user into removing the lie.

- A habit of checking for fact-checks slows sharers and readers down more generally, resulting in less overall virality (and hopefully more reflection).

What fact-checks don’t do is influence true believers. And that’s OK. That’s not the battle we’re fighting.

Regarding my work with the Digital Polarization Initiative, I’ll add that getting students to produce fact-checks matters not only for the fact-checks themselves but because it builds good information hygiene habits. In the process of producing them, students become a different sort of reader on the web, one more prone to use the web’s interactivity to do a quick check on the headlines that rile them up. So an important part of this work is shifting our orientation to the web from one of discussion (in which retrenchment is the norm) to one of investigation.

What If Your Context Menu Gave You Context?

The larger point is that if we want to deal with misinformation on a network, we have to think in network terms. And in network terms, the most important stuff happens before a person adopts a belief. A lot could be done there.

There’s the design of the web environment, for example. Open a browser like Chrome, go to a page, and right-click to bring up the context menu. The context menu is so named because it changes based on context. But what if it actually gave you context? What if, when you were confronted with an unfamiliar site, instead of a context menu that read like this:

- Back

- Forward

- Reload

- Save As…

You got a context menu that said:

- Site Info

- Fact Checks

- Reload

- Save As…

and so on, where Site Info ran a custom Google search compiling information ranging from Wikipedia’s description of the site to WHOIS and domain-creation-date results, and Fact Checks looked for references to the page on prominent fact-checking platforms?
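To make the Site Info and Fact Checks idea a bit more concrete, here is a minimal Python sketch that turns a page URL into the searches each menu item might open. Everything in it is an assumption for illustration: the function names are hypothetical, the list of fact-checking sites is a small hand-picked sample, and the WHOIS and creation-date lookups are left out because they need a registry query rather than a search URL. It only builds URLs; it doesn’t fetch or summarize anything.

```python
from urllib.parse import quote_plus, urlparse

# Hypothetical, hand-picked sample of fact-checking sites (illustrative only).
FACT_CHECK_SITES = ["snopes.com", "politifact.com", "factcheck.org"]


def domain_of(page_url: str) -> str:
    """Return the page's hostname, lowercased, without a leading 'www.'."""
    host = urlparse(page_url).hostname or ""  # hostname drops any port
    return host.removeprefix("www.")  # requires Python 3.9+


def site_info_links(page_url: str) -> list[str]:
    """'Site Info': searches that describe the site from the outside."""
    domain = domain_of(page_url)
    return [
        # Wikipedia's own search, looking for an article about the site.
        "https://en.wikipedia.org/w/index.php?search=" + quote_plus(domain),
        # A web search that excludes the site itself, so results are about it.
        "https://www.google.com/search?q=" + quote_plus(f"{domain} -site:{domain}"),
    ]


def fact_check_links(page_url: str) -> list[str]:
    """'Fact Checks': search the listed fact-checkers for mentions of the page."""
    sites = " OR ".join(f"site:{s}" for s in FACT_CHECK_SITES)
    query = f'"{page_url}" ({sites})'
    return ["https://www.google.com/search?q=" + quote_plus(query)]


def context_menu(page_url: str) -> dict[str, list[str]]:
    """Map each proposed menu label to the URLs that item would open."""
    return {
        "Site Info": site_info_links(page_url),
        "Fact Checks": fact_check_links(page_url),
    }


if __name__ == "__main__":
    for label, urls in context_menu("https://www.example.com/story").items():
        print(label)
        for url in urls:
            print("  ", url)
```

Sticking to plain search URLs keeps the sketch free of API keys; an actual browser feature would presumably fetch those results and condense them into something a reader can absorb at a glance.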

What if your browser could recognize prominent names, such as “Andrew Wakefield,” and highlight them, encouraging people to pull up a hover card summarizing the work, worldview, and issues around an author or quote source before taking the quote at face value?

I know, I know: there are extensions that can do this. I’ve been working with Jon Udell on just such an extension for the Digital Polarization Initiative. But extensions, while fine for the short term, are the wrong long-term model. It’s like a cruise ship saying, “We’ll give hand sanitizer and sinks to those who request them.” If you want to fix the problem, you put the sanitizer in the hallways, not in the rooms.

If you want to stop disinformation, AI is great. But a more effective idea would be to make the browser (or the Facebook interface) a better tool for investigation.

Once you do that, you start to build a web ecosystem where fact-checking can have real impact.

Ending this abruptly, because, well, work beckons. But let’s think a lot bigger than we have been on this.
