So to review from parts one and two:

- People have a lot of stuff they can share or attend to online.

- In order to efficiently create and process content we look at things like “evidence” through tropes.

- Tropes, not narratives or individual claims, are the lynchpin of activism and propaganda, whether true or false, participatory or not. They are more persistent than claims, more compelling than narrative.

- The success of a given trope is often based on its “fit” with the media, events, and data around the event (the “field”). A trope must be compelling but also fit the media available to creators. In participatory propaganda (see Wanless and Berk) tropes must be productive.

- In participatory work, productive tropes are often adopted based on their productivity, and then retconned to a narrative. Narrative-claim fit is far less important than trope-field fit.

- A key indication that something is a trope is that it can be used across multiple domains; that is, it can be used to advance a variety of different claims.

- For example, the Body Count trope was used against the Clintons — but it was also used in anti-vaccine messaging, Kennedy assassination conspiracism, and theorizing about the John Wayne film that purportedly killed its cast.

Today I’m going to talk about how effective fact-checkers of misinformation also think in tropes in order to debunk nonsense, even if they don’t use that language to describe what they do. I’ll also suggest that making that way of thinking more explicit to readers might make readers more equipped to handle novel misinformation.

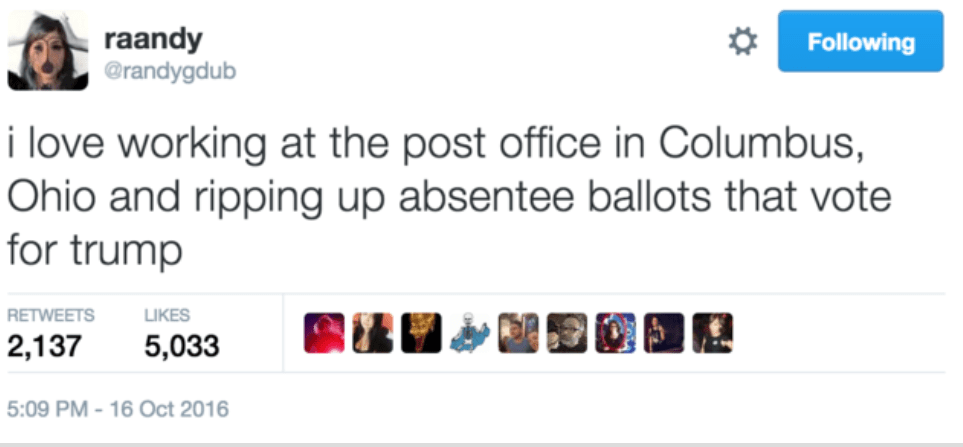

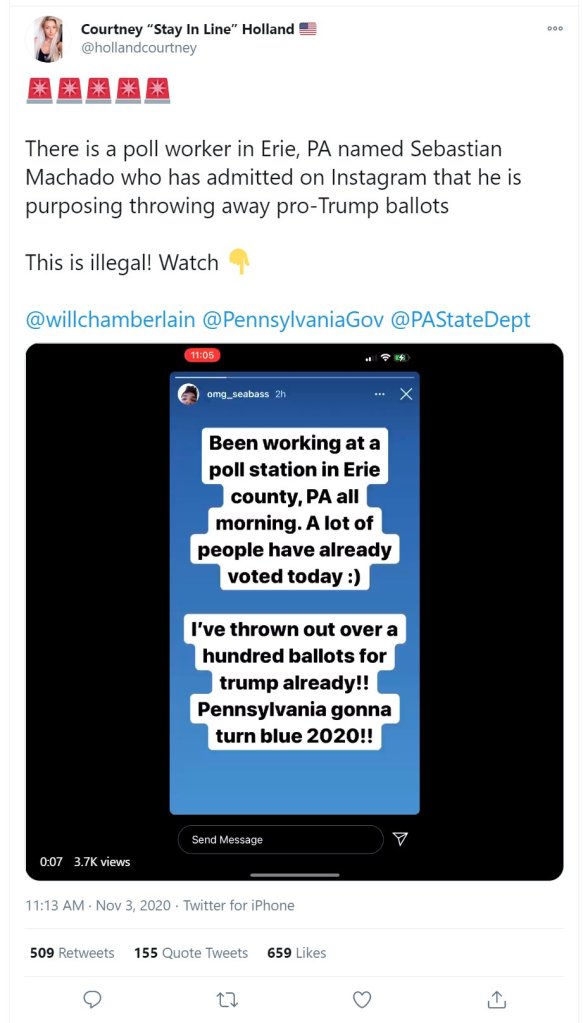

But first I wanted to talk about what happens now in terms of platform interventions and end-user interpretation. During the 2020 election, while working a rapid response effort, this tweet came across my radar. It was pulled up as part of an automated Twitter search I had set up for “throwing” + “ballots”.

I came across this about ten minutes after it was posted, and had a ticket in on it about 5 minutes after that. It was an amazing stroke of luck, really, to come upon it that early. I knew it was going to rack up real numbers, and over the next 45 minutes it did, until it was confirmed with the folks in Erie that this person did not, in fact, work in Erie. They couldn’t be a poll worker because they were not even a registered voter.

During the period between discovery and debunking, in the absence of official (dis)confirmation, people tried other things. They looked at the Instagram feed, trying to determine the high school or college the poster came from. Looked for other satirical posts. Tried to message the poster. And so on. This is the process of fact-checkers, and they are good at what they do. At the same time, there was something very strange about it, watching the reshares of the post tick up at astonishing rates while evidence was compiled. Because if you had offered any fact-checker 100 to 1 odds on whether this was fake, they would have taken them. It was obviously fake. Not just because it was unlikely that anyone would advertise a crime of this sort on Instagram. It was likely satire gone wrong because we had seen this happen exactly this way before.

It had happened in 2016, for example, which was why I had my scanner looking for this in the first place. Here it’s a satirical post about a postal worker, but same thing:

This post spiraled out of control, being promoted as an example of open fraud by Gateway Pundit and Rush Limbaugh. Here’s Limbaugh on his program back then:

So you’ve got a postal worker out there admitting he’s opening absentee ballots that have been mailed in and he’s just destroying the ones for Trump. What happens if he opens one up for Hillary, gotta reseal it? I guess they don’t care, what does it matter, as long as it says Hillary on it, what do the Democrats care where it came from? It could be postmarked Mars and they’ll take it.

(as transcribed by Know Your Meme)

This isn’t a case of a bit of misunderstanding blown out of proportion. It was a big misunderstanding in 2016. People really believed this, and generated so many outraged calls that the Post Office (I guess in some foreshadowing of what would happen in 2020) had to issue a statement:

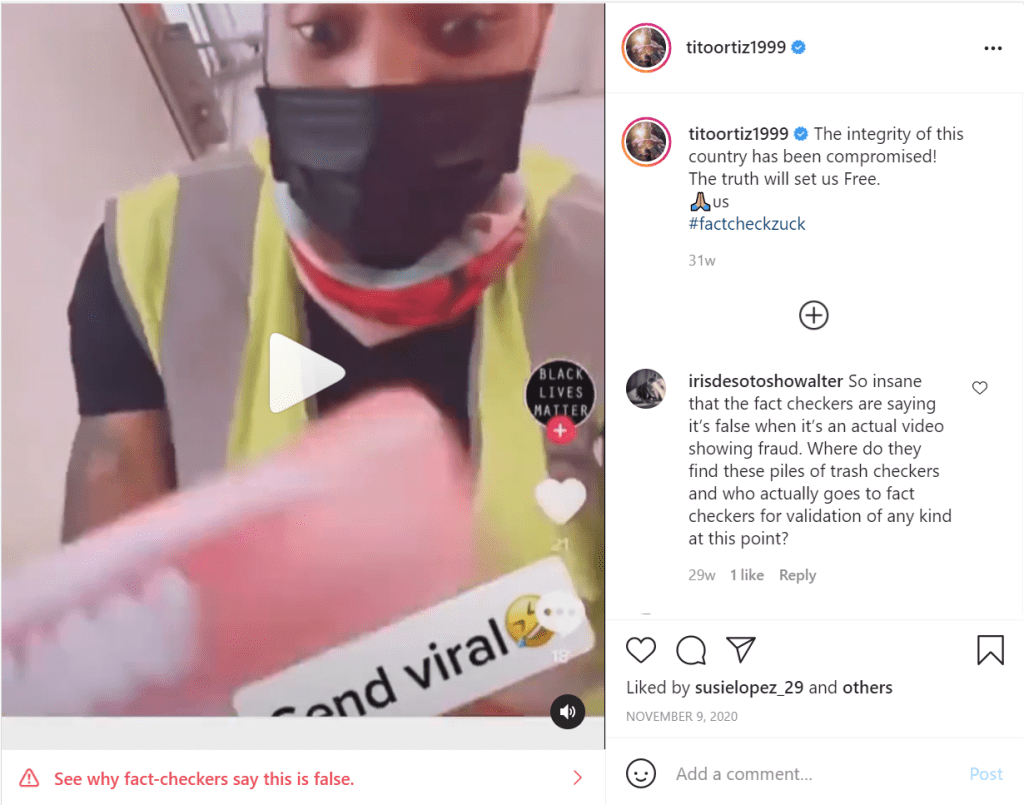

Back to 2020 and the “poll worker throws away ballots”. A week after I found this, it would happen in a TikTok video, again — satire/trolling showing a “poll worker” throwing out ballots shared as fact (here reproduced on Instagram) and again, shared to hundreds of thousands of people (maybe millions?) as true.

As far as tropes, satire/trolling of this type is an interesting case (as is completely faked news) as it requires no “field” really, since it is completely fabricated. But it does require a knowledge of the existing tropes. As we mentioned in the first installment of this, the “public official discards ballots” trope is well established, especially in conservative circles, and when certain partisans see an embodiment of that trope via a tweet or a video they immediately comprehend it, share it, etc. The skids are greased and the trolling slides right through the system.

But my larger point is this: in many cases, even though fact-checkers go through the steps to debunk something, they already have really solid plausibility judgments about whether it is likely to be fake. And it’s not necessarily because they are better critical thinkers, or have an encyclopedic knowledge of election oversight procedures. It’s because they have seen the same trope before, over and over, and know the ways in which it is likely to come up empty.

Body Double

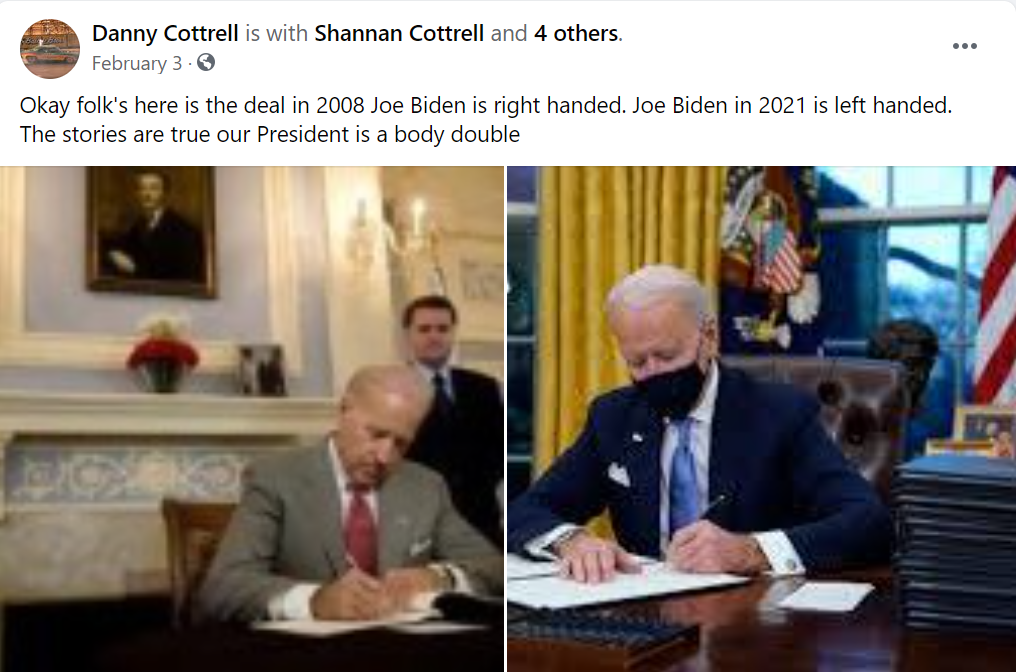

Let me give a somewhat ridiculous example. Perhaps you are a person who is blessed enough to not know the theory that Joe Biden has been replaced by a Body Double. (Yes, it’s a QAnon thing).

Here’s a picture that’s “evidence”. Joe Biden used to be left-handed, and now he’s right-handed.

I’m sure it wouldn’t take you more than 20 seconds to figure out what’s going on here, but the average fact-checker has solved this before they’ve even finished reading the claim.

“Picture is reversed,” they say. “Check out how the handkerchief pocket’s on the wrong side.”

Are they Sherlock Holmes reborn, that they can literally process this in under a second?

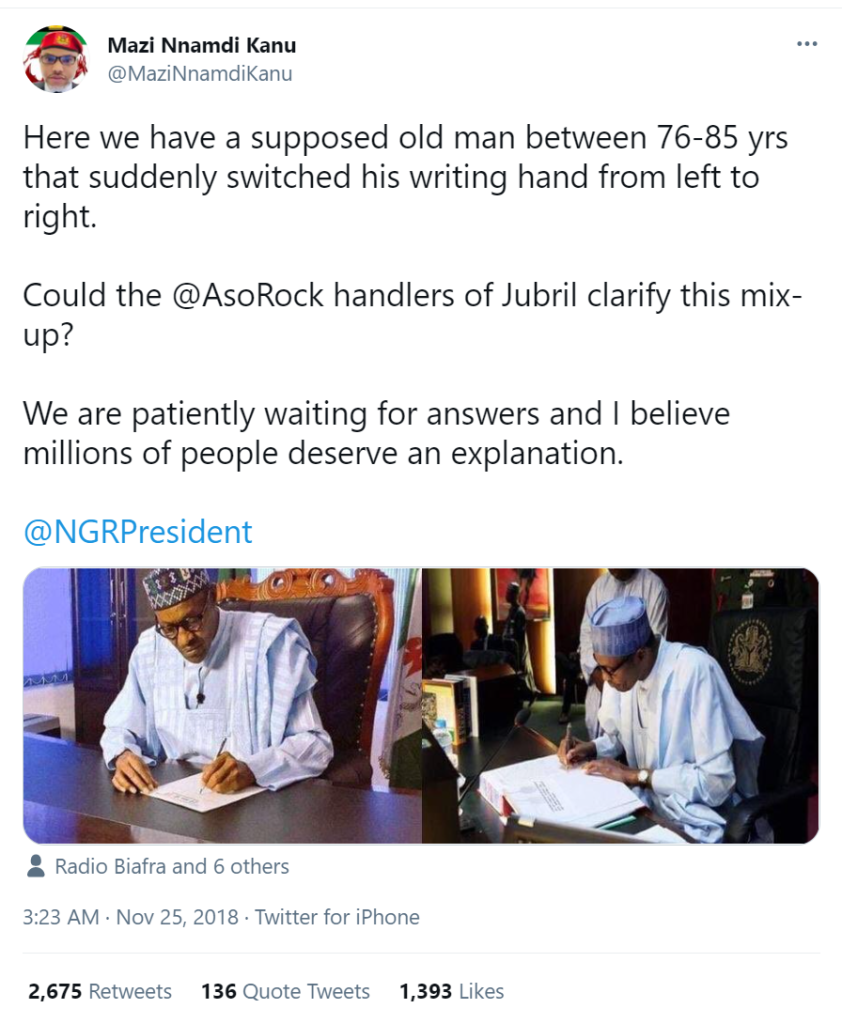

No, of course not. They’ve just seen this trope before, and they know its specific bag of tricks. Here is the same “body double forgets what hand to use!” trope used against the President of Nigeria:

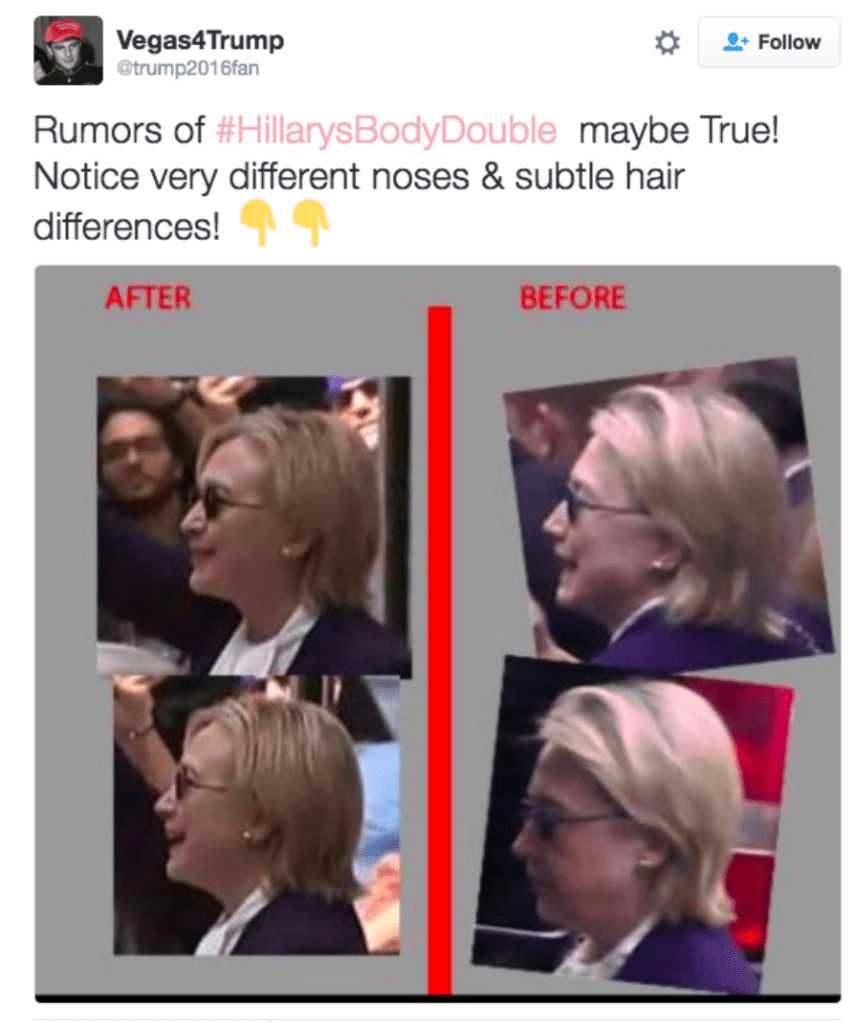

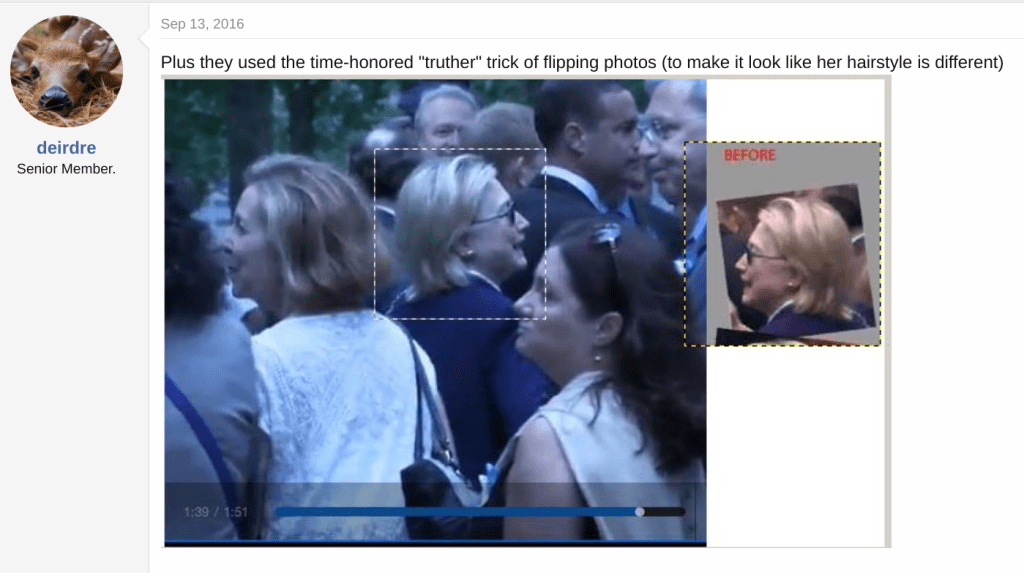

The result after checking? Flipped photograph. And we also saw this in 2016, where pictures of Clinton before and after her collapse at a 9/11 event showed “subtle differences in her hairstyle and face” indicating (supposedly) that it might really be a body double:

So, Body Double? Or…

Yes, it turns out that different sides of your face look different.

This is one, just one, of maybe two dozen things you keep in mind when looking at instances of the Body Double conspiracy trope. Consider this random bag of big and small issues with comparing photographs, each paired with a body double conspiracy where I’ve seen it exploited (not comprehensive by any means, just from memory):

- Camera angle and lighting can make the same person look radically different (Derek Chauvin, too many others to count)

- When a high resolution photo is compared to a low resolution one, a person looks different (Hillary Clinton)

- Women in particular can appear to change height due to footwear (Melania Trump, Hillary Clinton, Avril Lavigne)

- As we grow older our eye sockets, nose and upper jaw continue to change, and ears lengthen at a rate of a millimeter every four or five years (Paul McCartney, Avril Lavigne, Joe Biden)

- Face-lifts or other surgery can alter the appearance of the earlobe (Joe Biden, President Buhari)

- Skin blemishes sometimes go away, are removed, or airbrushed out (Avril Lavigne)

- Stills from video (especially when photographed on a TV) often create longer or rounder faces due to distortion (Derek Chauvin, Melania Trump, Paul McCartney)

- Lefties often use their non-dominant hand for certain tasks (Paul McCartney)

An average person might be able to come to all these through a rational process, but for a fact-checker familiar with the trope and its more popular instantiations it’s likely far more automatic. In fact, you can see that the two sides of this essentially work the same list. The conspiracist goes down the mental list looking for signs of changed height, shifts in dominant hand, changes to the shape of the ears, photos where the face seems longer or shorter, and sees if any of these differences can be found in the “field” of photos available. Those things are surfaced, wrapped in the trope, and disseminated. Then the fact-checker goes through the same mental list but from the other side of things: looking at the way the different types of “evidence” associated with this trope (earlobes, dominant hand, etc.) tend to pan out. E.g. “Yeah, this is the old ‘Paul McCartney’s ears are different’ thing; you can’t compare old and young photos for that.” They check everything of course, but they don’t start from scratch; they start from an understanding of the sorts of aspects of the field the trope tends to exploit. The creator uses their knowledge of the trope to construct, and the fact-checker uses their knowledge of the trope to deconstruct.

Plausibility judgments and discourse rules

A bit of a detour, but I promise it will make sense in a minute. As part of a larger argument in his recent book The Knowledge Machine, Michael Strevens points out a bit of a misconception about science. Or perhaps it’s a paradox?

Scientists must argue their ideas without any reference to the ways in which those ideas are personally plausible to them, or to their opinions of the competence of the person arguing against them. The rule is that the argument must be focused on the data, and it has to be that way for science to advance. This discourse norm is what allows science to progress. In order to make my case I have to produce evidence that you can then use to make yours.

The misconception though is this — because the norm does not allow the use of plausibility judgments (e.g. in the absence of evidence, do I think this is true or not?) it is often assumed that the scientific mind is one that relies on a lack of preconception and bias towards any idea. But anyone who has worked in science knows that this is false. A scientist without preconceptions, who does not listen to intuitions based on experience, is a horrible scientist. In fact, while the papers a scientist writes are important to the progress of science, it’s the ability of a scientist to make good judgments before the data exists that forms a lot of their value to the system.

Reading this book with COVID-19 raging, I couldn’t help but see that tension in how things have played out over the past two years. We came to a situation that was truly novel, at least as an instance, where there was no data early on and yet decisions had to be made. And these two visions of scientist value came into conflict. Why? Because of things like this: many scientists, asked “Will the vaccines provide at least a year of immunity?” said “We don’t really know.” (ADDED NOTE: here I am referring to immunity against severe disease). That’s a discourse rules answer that applies one model of a scientist’s value (rely on data) to a public issue. Alternatively, a scientist could say “Everything we know about both vaccines (of any type) and coronaviruses says, yeah, you’ll get a year out of it at least.” That’s another model of the value of scientists: that having seen many instances of things like what we were experiencing, at least in some dimensions, they could make accurate guesses (at least about certain aspects of this, like the durability of immune responses). And while I know this is a controversial statement, I really do believe that a lot of people died unnecessarily and that institutional trust was eroded unnecessarily because many scientists selected the wrong vision of value. When data is available, it’s discourse rules time (scientist with no presuppositions). But in the absence of data it’s the ability of scientists to make plausibility judgments that provides value.

Stepping back we see that this isn’t just a pattern for scientists, but rather for all professionals that must make public cases. The rules are not as strict, of course. But reporters and fact-checkers encounter similar patterns and tensions as scientists. From the discourse norms side of the equation, the fact-checker must take each Body Double charge as unique and specific. Like a scientist they can use their knowledge about how things generally go to make informed guesses as to where to look for answers. But the fact check itself is not a list of those intuitions; it is the result of the investigations, data-driven, spurred by those intuitions. And once the evidence is there, the ability to make this case, from the data itself, forms the value of reporting and fact-checking.

But what about when the data is not there? What about when we are in that pandemic situation where the answer is needed now and the data is going to take a while?

I’d argue that that is where we are with a lot of quickly emerging misinformation. Take the first example that we opened with, the poll worker in Erie, PA “throwing away ballots”. I found this example on that day because, knowing there is a false claim or two like this every election, I set up a process that looked for this term about every 20 minutes. That prediction paid off, and within about 10 minutes of it going up I had spotted it (around 11:23). Even then it was quickly accelerating. But it still had only moderate spread when I screencapped it, and at the time I came across it, it was the only instance on Twitter.
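For readers curious what a scan like that involves, here is a minimal sketch of the matching step only. It is not the tool I used: the function names, the shape of the `posts` list, and the sample posts are all made up for illustration, and how posts get fetched (API, export, scraper) is left out entirely.

```python
import re

# Terms taken from the "throwing" + "ballots" search described in this post.
SEARCH_TERMS = ("throwing", "ballots")
POLL_INTERVAL_MINUTES = 20  # the check described above ran roughly every 20 minutes


def matches_all_terms(text, terms=SEARCH_TERMS):
    """Return True if every term appears in the text as a whole word, ignoring case."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return all(term.lower() in words for term in terms)


def scan(posts, seen_ids, terms=SEARCH_TERMS):
    """Return posts that match all terms and were not flagged in an earlier pass.

    `posts` is an assumed list of dicts with "id" and "text" keys (hypothetical shape).
    `seen_ids` is a set that is updated in place so repeat passes stay quiet.
    """
    new_hits = []
    for post in posts:
        if post["id"] in seen_ids:
            continue
        if matches_all_terms(post["text"], terms):
            seen_ids.add(post["id"])
            new_hits.append(post)
    return new_hits


# Made-up posts standing in for the results of one polling pass.
sample = [
    {"id": 1, "text": "Poll worker bragging about throwing away ballots"},
    {"id": 2, "text": "Mail your ballots early this year"},
]
seen = set()
first_pass = scan(sample, seen)   # flags only the post containing both terms
second_pass = scan(sample, seen)  # nothing new, so nothing is flagged again
```

The point of the `seen_ids` set is that a person only gets paged once per post; everything after that is human judgment, which is where the trope knowledge comes in.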

It took me about five minutes to write up a report suggesting this was a likely hoax, and then promote it to Level 2, at 11:27:

Around the time I escalated it, about 15 minutes after the initial post, a number of other commentators reposted it using their own crop of it.

By the end of the hour, around noon my time, three things had happened. First, reporters and fact-checkers had confirmed there was no such worker in Erie, PA. Second, the initial Instagram post had been taken down. Third, one of the initial posts had been retweeted by Donald Trump Jr., and the whole thing had entered uncontrolled spread, as copies of copies of screenshots of Instagram posts circulated the net. And the reactions to this were, well, prescient.

Relevance to Mitigation Efforts

Now, I’m not arguing that content removal should happen on my (or anyone else’s) intuitions. In fact, I’m arguing the opposite. With the dynamics as they are, trying to speed up content removal is really a bit of a fool’s game, at least for certain types of content.

After all, this is an example of everything going right in a rapid response scenario. A piece of fakery so predictable that I set up a program to explicitly scan for it 10 days before it appeared, a discovery of it within minutes of it being posted, a report on it filed within minutes of finding it, an investigation of it which confirmed with folks in Erie that it was indeed fake, within 25 minutes of my report. And yet, none of it mattered.

One reaction, a wrong one, is that such content could be removed on a guess. That is, we know how this trope goes based on plausibility judgments of fact-checkers who have been in this rodeo dozens of times before, even though we don’t know the details of this instance. Is that enough to take it down? No, a thousand times no. That’s not a future anyone wants. Sometimes bad tropes turn out to be true. There’s a trope, for example, that emerges every time there is an explosion somewhere which claims that really it wasn’t an explosion, it was a missile. This trope has a history so bad it’s almost comical. Conspiracy theorists would have you believe TWA Flight 800 didn’t explode, it was hit by a missile; the Pentagon wasn’t hit by a plane on 9/11, it was hit by a missile; the factory that recently exploded in Beirut didn’t explode, it was hit by a missile; the RV in Nashville last Christmas, of which we have video from half a dozen directions and a crater under its smoking remains, didn’t explode, it was (you guessed it) hit by a missile.

But what about MH17, the flight that exploded over Ukraine, and was revealed pretty quickly to have been shot down — by a missile? Sometimes the trope proves true. Just as in this pandemic many times the past was a good guide for scientists to make guesses about how things would turn out — but sometimes the past wasn’t a great guide. So we want behavior that is informed by the sort of plausibility context a fact-checker calls to mind when seeing an instance of a trope, but we do not want summary judgments on that.

And here’s where we find perhaps one application of all this theory around a trope-focused approach. Because what if, instead of focusing on truth or falsity of content early in a cycle, we focused on providing the sort of trope-specific context fact-checkers bring to the table? We don’t have the fact-check yet, but we do have the history of the trope that informs their plausibility judgments. We know for example that this trope of the “ballot-discarding public official” will appear in 2022 and 2024, and that we’ll go through the same pattern of discovering it and taking so long to disconfirm it that any subsequent actions are rendered meaningless. But what if in the meantime you could ask everyone liking it and sharing it to read a short history of the trope, and the ways it’s been used in the past? If they still want to tweet it after knowing that hey, this is the same scam people fell for three elections in a row, then okay, go ahead.

You would have a set of trope and subtrope pages, well maintained, that zeroed in not on broad truths but on very specific subtropes that are typically associated with misinformation. You think Melania has been replaced by a body double because of a “height change”? OK, fine, but first look at a page that describes how that “the body double is a different height” claim played out with Paul McCartney, Hillary Clinton, and Avril Lavigne. When the fact-checking does not exist yet, provide the sort of context a fact-checker would start with.
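To make the idea a bit more concrete, here is a rough sketch of how a share prompt might look up subtrope pages. Everything structural here is an assumption for illustration: the `SubtropePage` type, the trigger phrases, and the naive substring matching are hypothetical, and no platform works this way that I know of. The past instances and explanations are the ones listed earlier in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubtropePage:
    title: str
    trigger_phrases: tuple   # assumed phrases; real matching would need to be far better
    past_instances: tuple    # names taken from the examples in this post
    usual_explanation: str


PAGES = (
    SubtropePage(
        title="The body double is a different height",
        trigger_phrases=("body double", "height"),
        past_instances=("Paul McCartney", "Hillary Clinton", "Avril Lavigne"),
        usual_explanation="Footwear can change apparent height.",
    ),
    SubtropePage(
        title="The body double uses the wrong hand",
        trigger_phrases=("body double", "handed"),
        past_instances=("Joe Biden", "President of Nigeria"),
        usual_explanation="The photograph is often flipped.",
    ),
)


def context_pages(post_text, pages=PAGES):
    """Return the subtrope pages whose trigger phrases all appear in the post."""
    text = post_text.lower()
    return [p for p in pages if all(phrase in text for phrase in p.trigger_phrases)]


def share_prompt(post_text):
    """Build the text shown before a share; None means no prompt is shown."""
    pages = context_pages(post_text)
    if not pages:
        return None
    lines = ["Before you share, a short history of this trope:"]
    for page in pages:
        names = ", ".join(page.past_instances)
        lines.append(f"- {page.title}: seen before with {names}. {page.usual_explanation}")
    return "\n".join(lines)
```

Note that the prompt never says the post is false. It only surfaces how the same subtrope has played out before, and the user can still share afterwards.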

Such an intervention is interesting to me because far from being an impingement on speech, correctly designed it’s a service. Perhaps I see a claim that “COVID-19 was actually a bioweapon” that I want to retweet. As I go to do that, I am sent to a page that reminds me that while such a thing is possible, the idea that everything from AIDS to Swine Flu was a bioweapon is pretty old, emerges like clockwork, and a lot of the arguments people make for the claim have been debunked in previous iterations. Does this look like some of those instances, or does it look substantially different?

To someone who just wants to put out the propaganda of course, this isn’t much of a deterrent. But to a user who is legitimately trying to process the issue, such context would be welcome. And if those users make better decisions about sharing it, the downward nudge in virality could be an important factor in a multi-prong approach against misinformation.

I also like, of course, that any attempt to spread something like a body double conspiracy or a claim that a debate participant was wired up with a secret headpiece will necessarily lead to a bunch of people learning about the history of the specific trope and perhaps being inoculated against false future instances of it.

I should say that I have been hesitant in the past nine months or so to suggest interventions at all. So many interventions designed to capture blatant mistruths seem to capture unwitting people of good will while the bad actors find ways to skate around them (or game the referees). And lots of good ideas are hampered by poor designs and non-existent support (e.g. Twitter’s ‘appeals’ process, which to all intents and purposes doesn’t actually exist).

But providing end-users “plausibility contexts” around specific (and very granular) tropes seems a much more promising approach than generic and useless “Go to the CDC”-type labels, and potentially much more responsive to emerging misinformation than claim fact-checking and content removal schemes. But it’s going to start with us moving away from larger, ideology-bound narratives on the one hand and away from overly specific but slow-to-verify claims on the other. It’s this middle layer in between the narrative and the claim, the trope, that is both specific enough that it can be targeted and predictable enough that interventions can finally get a step ahead of the game.

OK, that’s it for today — the final installment of this (Part #4) is next and will talk about using pre-bunking around tropes to reduce misinformation around events associated with those tropes.

3 responses to “Tropes and Networked Digital Activism #3: How Fact-Checkers Use Knowledge of Tropes to Fact-Check Quickly (and how you could too)”
