I signed up for the CBC Chatbot that teaches you about misinformation. The interface was surprisingly nice — it felt less overwhelming than the typical course stuff I work with. So kudos on that.

On the downside, it’s likely to make people worse, not better, at spotting dodgy Facebook pages.

Why? Because — like a lot of reporters, frankly — they’ve taken “fake news” [sigh] to be this narrow 2016 frame of “Pretending to be a known media company when you’re not”. And that results in this advice:

What does “legitimate” mean here? I assume to the people that wrote the course it means that the account is not being spoofed, that it really is the organization that it purports to be. This is, in turn, based on the 2016 disinformation pattern where there were some very popular sites and pages pretending to be organizations that they were not (e.g. the famous fake local newspapers).

There are two problems with this. First, this method of disinformation is relatively minor nowadays. I still do a prompt or two on it in my classes, but find that there are almost no current examples of it out there that are reaching viral status. I was talking to Kristy Roschke at News Co/Lab last week, and she was saying the same thing. As a teacher and curriculum designer, at first you’re like, “Wow, it’s getting hard to find new examples of this to put in the course!” And then, at a certain point, you say: if it’s so hard to find viral examples of this, should it still be in the course at all?

The second problem is more serious. Because in solving a problem that increasingly does not exist, the mini-course creators birth a new problem: the belief that the checkmark is a sign of trustworthiness. If there is a checkmark, you know the page is “legitimate,” they say. I don’t know if the people who wrote this were educators or not, but a foundational principle of educational theory is that it doesn’t matter what you say; what matters is what the student hears. And what a student hears here, almost certainly, is that blue checkmarks are trustworthy.

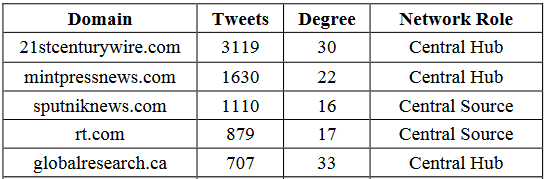

That’s a problem, because the real vectors of disinformation at this point are often blue-checkmarked pages. Here, for example, is the list of central hubs and central sources of conspiracy theorizing about the White Helmets in Syria, from Starbird et al.’s paper on the “echo-system” (I’ve edited the list to only include central sources and hubs for orgs where there is a Facebook page).

It’s a little difficult to explain this clearly and precisely, but let’s just say the above domains are part of a network of sites by which certain types of disinformation are propagated. The people running these sites have various levels of intent around that: obviously, RT is considered by most experts in the area to be a propaganda arm of the Russian government (and in particular supports Putin’s agenda and interests). Same with Sputnik. The others may be involved for more idealistic reasons, but what the work of Starbird et al. shows is that in practice they uncritically reprint the stories introduced by the Russian entities with only minor alterations, and as a result become major vectors of disinformation.

In 2019, these “echo-systems” are far more likely to be the source of disinformation than a spoofed CBC page (This was likely the case in 2016 too, but at least spoofing was in the running). But what do we find when we apply the blue checkmark test to them? Half of them are blue checkmarked:

Am I saying you can never read any of these sites? Of course not. I don’t think it’s a good investment of your time to read Russian or Syrian propaganda and disinformation, and it has bad social effects of course, but you should read what you want.

From the online media literacy standpoint, however, a media literate person would not read these sites without understanding the way in which they are very, very different than the CBC, Reuters, or The Wall Street Journal. Focusing on the blue-checkmark first has the potential to mislead a new generation of people about that, the way that focusing on dot orgs misled the last.

It’s not just state actors that win in a “Trust the blue checkmark” world, incidentally. Look through the medical misinformation space and you’ll find plenty of blue checkmarks. And we haven’t even got into the other side of the problem: the number of pages that are from trustworthy and important sources but don’t have a checkmark, and hence will be discarded out of hand, not just as “unverified organization” but as illegitimate.

Education is Hard

It’s really hard to get this stuff right. To do it in education we run and re-run lessons with students, then assess in ways that allow us to see if students are misconstruing lessons in unanticipated ways.

I learned in an early iteration of our materials, for example, that a way I was talking about organizations caused a very small percentage of students (less than 2 percent) to walk away with the idea that organizations with bigger budgets were better than those with smaller budgets. That’s not what we said, of course, but it’s what a few students heard. (We were trying to point out that something claiming to be a large professional organization, for example the American Psychological Association, should normally have a large budget, whereas a professional organization that claimed to speak for an industry but had a budget of $70,000 a year probably wasn’t what it claimed to be.) So we modified how we presented that, and are hammering out a concept we call commensurability (we actually borrowed this idea from the Calling Bullshit course’s discussion of how to think about expansive academic claims and the reputation of various publication venues).

Early on, we also realized that when we asked if something was a trustworthy source, the way we phrased that question didn’t account for the news-genre specificity of trust. (E.g. you might trust your local TV station to report on a shooting, but you probably should not trust it to give you diet advice, as most local stations have no real expertise in that.) We changed the way we phrased certain questions to “Is this a trustworthy source for this sort of story or claim?” Then we meshed some discussions with the ACRL framework for information literacy, particularly frame number one: authority is contextual and constructed.

And we did this sort of work repeatedly, both with the students and faculty in the 50+ courses involved in our project and in talking to the people outside our project using the materials. We get there because we are constantly doing formal and informal assessment against authentic prompts, and looking for points of student confusion. We get there because we assess, and can say at the end that we improved student performance on the sorts of tasks they are actually confronted with in the real world.

It’s hard, and it’s a never-ending process. But as app after app and mini-course after mini-course rolls out on this stuff, it’s worth asking if the people producing them are approaching them with the same eye towards the true problems we face and the true sources of student confusion. If they aren’t, it is quite possible they are doing more harm than good.
